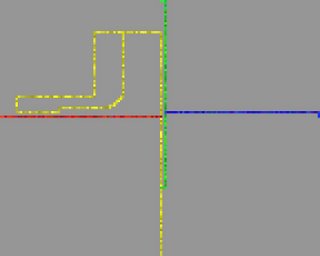

I have made a small amount of progress with the lasers wars music game. In addition to the features already listed in the first post on this subject, the game now has support for four players (blue, red, yellow and green) and a first and second tier sound mapping.

The first tier is made of data concerning only one player, whilst the second tier maps data from two players onto two parameters of the same event (a joint sound, so to speak). Currently, the first tier of data is controlling sine wave frequency and spatial movement, and a cross-pollination of information from one player to another is controlling the four tempi. The second tier is controlling the delay lines (feedback and delay time parameters).
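To make the tiers a little more concrete, here is a minimal Python sketch of how such a mapping could be wired up. It is not the game's actual code: the `PlayerState` fields, the function names, the parameter ranges, and the choice of which player feeds which tempo or delay parameter are all assumptions made for illustration.

```python
from dataclasses import dataclass

PLAYERS = ["blue", "red", "yellow", "green"]


@dataclass
class PlayerState:
    # Hypothetical normalised laser position, each axis in 0.0..1.0.
    x: float
    y: float


def scale(value, lo, hi):
    """Linearly map a 0..1 value onto the range lo..hi."""
    return lo + value * (hi - lo)


def first_tier(state):
    """First tier: one player's data drives that player's own sound."""
    return {
        "frequency_hz": scale(state.y, 110.0, 880.0),  # assumed range
        "pan": scale(state.x, -1.0, 1.0),              # assumed stereo pan
    }


def cross_tempo(states):
    """Cross-pollination: each player's tempo comes from another player.

    Pairing each player with the next one in PLAYERS is an assumption.
    """
    tempi = {}
    for i, name in enumerate(PLAYERS):
        neighbour = states[PLAYERS[(i + 1) % len(PLAYERS)]]
        tempi[name] = scale(neighbour.x, 60.0, 180.0)  # assumed BPM range
    return tempi


def second_tier(a, b):
    """Second tier: two players jointly set two parameters of one delay line."""
    return {
        "delay_time_s": scale(a.x, 0.05, 1.0),  # assumed range in seconds
        "feedback": scale(b.y, 0.0, 0.9),       # kept below 1.0 to stay stable
    }
```

For example, `second_tier(states["blue"], states["red"])` would give one joint delay sound in which blue sets the delay time and red sets the feedback.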

There will be at least four tiers of data mapping, of various types and methods, each with a different number of components, although I expect the fourth tier to have only one component, or instance, of mapping.

After all this blah, blah blah-ing, here are some examples. The pictures show the final state of play for each aural example. It is quite difficult to control four lasers at once! Needless to say, these examples come from a prototype of a prototype, so yeah, it's nowhere near finished. Or something like that.

Saturday, April 29, 2006
